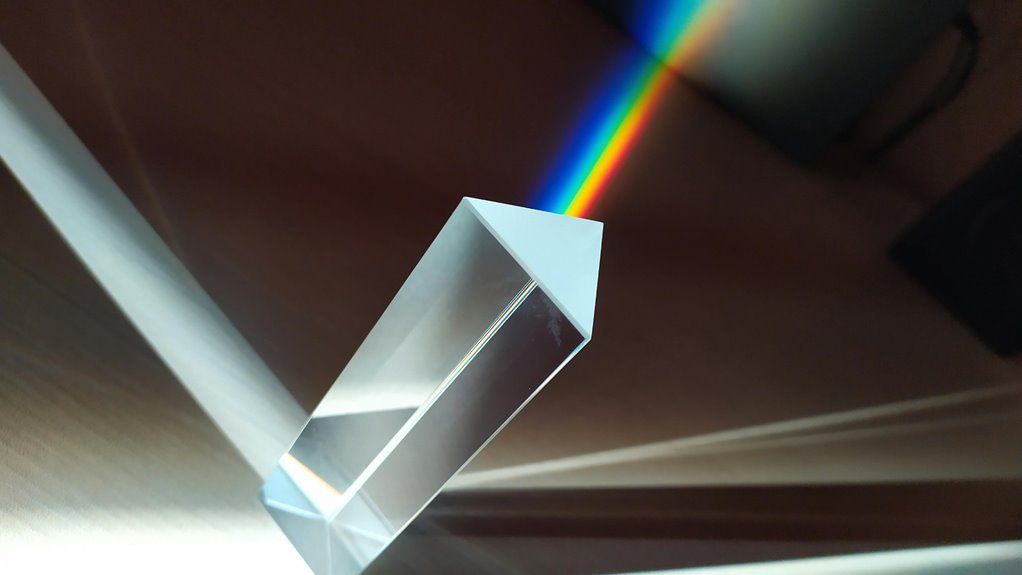

The Neural Prism 3157080190 Apex Node is a modular edge AI unit designed for low-latency, high-fidelity processing. Its neuromorphic cores enable spike-timed computation and energy-efficient parallelism, while deterministic edge resources support scalable orchestration and strong governance. The architecture emphasizes fault tolerance, autonomous workflows, and measurable QoS. Whether it is practical across diverse edge networks will depend on benchmarks and real-world deployments, which also raise questions about integration, security, and governance at scale.

What Is the Neural Prism Apex Node and Why It Matters

The Neural Prism Apex Node is a modular processing unit that performs high-fidelity neural computations within a broader AI architecture. It is intended to deliver predictable throughput and scalable orchestration.

Neural Prism emphasizes modularity; Apex Node enables edge deployment with low latency.

Enterprise integration focuses on interoperability, governance, and security, so that autonomous workflows remain controllable and can be held to rigorous performance benchmarks.

Architecture Highlights: Neuromorphic Cores, Real-Time Processing, and Fault Tolerance

Neuromorphic cores provide a hardware-efficient foundation for the Apex Node, enabling spike-timed computations and energy-proportional parallelism that align with real-time demands.
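To make "spike-timed computation" concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, a textbook model often used to explain neuromorphic hardware. It is not the Apex Node's actual core design: the function name `simulate_lif` and every parameter value are illustrative assumptions.

```python
def simulate_lif(input_current, dt=1.0, tau=10.0,
                 v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Return the step indices at which a leaky integrate-and-fire neuron spikes.

    All parameters are illustrative assumptions, not Apex Node specifications.
    """
    v = v_rest
    spikes = []
    for step, current in enumerate(input_current):
        # Forward-Euler update: the membrane potential leaks toward v_rest
        # while integrating the input current.
        v += dt * (-(v - v_rest) + current) / tau
        if v >= v_thresh:
            # The spike time, not an analog value, carries the information.
            spikes.append(step)
            v = v_reset
    return spikes


if __name__ == "__main__":
    # A constant drive above threshold yields regularly spaced spikes.
    print(simulate_lif([2.0] * 20))  # [6, 13]
```

Between spikes the neuron emits nothing, which is the usual argument for why event-driven hardware can keep energy use roughly proportional to activity.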

The architecture supports neural prism-inspired processing, distributing workloads across edge computing resources while maintaining deterministic timing.

Fault tolerance comes from modular cores and rapid reconfiguration, which keep the Apex Node operating under variable conditions and within real-time processing requirements.
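One way to picture reconfiguration across modular cores is a scheduler that moves tasks off failed cores onto the least-loaded healthy ones. The sketch below is a hypothetical illustration only: the function `reassign_tasks`, the core names, and the load metric (task count) are assumptions, not a documented Apex Node mechanism.

```python
def reassign_tasks(assignments, failed_cores):
    """Move tasks from failed cores to the least-loaded healthy cores.

    assignments: dict mapping a core name to its list of tasks.
    failed_cores: set of core names considered unavailable.
    Returns a new mapping that contains only healthy cores.
    """
    healthy = {core: list(tasks) for core, tasks in assignments.items()
               if core not in failed_cores}
    if not healthy:
        raise RuntimeError("no healthy cores left to take over the workload")

    # Collect orphaned tasks in a stable order so the result is repeatable.
    orphaned = [task
                for core in sorted(failed_cores) if core in assignments
                for task in assignments[core]]

    for task in orphaned:
        # Pick the core with the fewest tasks; break ties by core name.
        target = min(healthy, key=lambda core: (len(healthy[core]), core))
        healthy[target].append(task)
    return healthy


if __name__ == "__main__":
    layout = {"core0": ["a", "b"], "core1": ["c"], "core2": ["d", "e"]}
    print(reassign_tasks(layout, {"core2"}))
    # {'core0': ['a', 'b', 'e'], 'core1': ['c', 'd']}
```

Breaking ties by name keeps the outcome deterministic, which matters if reconfiguration is expected to preserve predictable timing.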

Performance Benchmarks and Real-World Use Cases

What measurable gains does the Neural Prism 3157080190 Apex Node deliver in real-world deployments, and how do those benchmarks translate into operational efficiency across edge networks? The analysis focuses on neural benchmarking metrics, latency reduction, and throughput stability observed across diverse edge deployment scenarios. Results indicate consistent performance gains that scale across nodes, enabling better resource utilization, autonomous orchestration, and predictable QoS for latency-sensitive applications.
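The two metrics named here, latency reduction and throughput stability, can be computed from raw samples in a few lines. The sketch below is one reasonable way to define them, using median latency and the coefficient of variation. The function names and these definitions are assumptions, and no figures from actual Apex Node deployments are implied.

```python
import statistics


def latency_reduction_pct(baseline_ms, candidate_ms):
    """Percentage drop in median latency from the baseline to the candidate."""
    base = statistics.median(baseline_ms)
    cand = statistics.median(candidate_ms)
    return 100.0 * (base - cand) / base


def throughput_cv(samples):
    """Coefficient of variation of throughput; lower means more stable."""
    return statistics.pstdev(samples) / statistics.mean(samples)


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not measured results.
    print(latency_reduction_pct([10, 12, 14], [5, 6, 7]))  # 50.0
    print(throughput_cv([100, 100, 100]))                  # 0.0
```

The median is used rather than the mean so that a few slow outliers do not dominate the comparison. Tail behavior is better checked with a separate percentile test.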

How to Evaluate and Deploy the Apex Node in Your Edge/Enterprise Stack

Evaluating and deploying the Apex Node within edge and enterprise stacks requires a structured approach: establish objective benchmarks, map integration points, and validate operational constraints before rollout.

The analysis emphasizes deployability considerations and security implications, weighing interoperability, latency budgets, and governance.
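A latency budget is easiest to enforce when it is written as a percentile check. The following sketch uses the nearest-rank percentile definition. The names `percentile` and `within_budget` and the choice of the 99th percentile are illustrative assumptions.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct percent
    of all samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def within_budget(samples_ms, budget_ms, pct=99):
    """True if the pct-th percentile latency fits inside the budget."""
    return percentile(samples_ms, pct) <= budget_ms


if __name__ == "__main__":
    latencies = list(range(1, 101))  # synthetic samples: 1 ms to 100 ms
    print(percentile(latencies, 99))     # 99
    print(within_budget(latencies, 99))  # True
    print(within_budget(latencies, 98))  # False
```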

Adoption decisions hinge on repeatable test suites, rollback plans, and clear responsibility boundaries, ensuring scalable integration without compromising resilience or compliance.
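A repeatable test suite and a rollback plan can be tied together with a simple gate: run every named check, and promote the rollout only if all of them pass. The sketch below is a hypothetical illustration. The function `rollout_decision` and the check names are assumptions, not part of any Apex Node tooling.

```python
def rollout_decision(checks):
    """Run named checks and decide whether to promote or roll back.

    checks: dict mapping a check name to a zero-argument callable that
    returns a truthy value on success.
    Returns ("promote", []) or ("rollback", [names of failed checks]).
    """
    failed = []
    for name, check in checks.items():
        try:
            ok = bool(check())
        except Exception:
            # A check that crashes is treated as a failure, not ignored.
            ok = False
        if not ok:
            failed.append(name)
    return ("promote", []) if not failed else ("rollback", failed)


if __name__ == "__main__":
    decision = rollout_decision({
        "latency_budget": lambda: True,
        "interop_smoke_test": lambda: False,
    })
    print(decision)  # ('rollback', ['interop_smoke_test'])
```

Returning the names of the failed checks makes it clear which owner has to act, which supports the responsibility boundaries described above.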

Conclusion

In sum, the Neural Prism 3157080190 Apex Node stands as a paragon of edge determinism: a silicon oracle that pretends to be tireless, fault-tolerant, and endlessly scalable. Its neuromorphic cores whisper in spike-timed efficiency while stakeholders bask in the glow of benchmarks and governance banners. Yet beneath the sheen lies the bureaucratic fever dream of universal QoS—where every latency sigh is measured, and every fault is politely apologized for with a firmware update. Satire aside, it delivers prudent, technical edge mastery.